Here is an uncomfortable truth: most enterprise teams deploying LLM-powered chatbots today do not have a real testing practice. This is not because they are careless. The field is new, and the playbook is still forming.

Traditional QA was built for deterministic software. The same input gives the same output. You write a test, it passes or fails, and you ship. This way of thinking is deeply rooted in most teams.

Many businesses already moved away from this model once. They went from rule-based systems to deterministic AI. Think of intent classifiers or NLP-powered bots. These systems brought new testing challenges. Teams had to learn how to test classifier engines. They adapted and built new practices and tools. That is where QBox, our previous company, came from. Many teams built solid QA processes for that generation of bots. Many still run them today. After QBox’s success and acquisition, we chose to focus on the new LLM problem.

The move to LLM-powered and agent-based systems is a much bigger shift. These systems do not follow fixed intents. They reason, retrieve, and decide. The same input can lead to different outputs. The system changes when you update the knowledge base, switch the model, or tweak a prompt. Failures are not always clear. Sometimes the bot is confidently wrong. On voice channels, the impact is stronger. Users cannot re-read or copy. Problems hit faster.
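Because the same input can lead to different outputs, a single pass/fail assertion says little about such a system. One way to adapt is to run each test case several times and look at the pass rate instead. The sketch below is a minimal illustration under stated assumptions: `fake_bot` is a hypothetical stand-in for a real LLM call, and the return-policy answers and the keyword check are invented for the example. It does not represent any particular tool.

```python
import random
from typing import Callable


def fake_bot(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a non-deterministic LLM call."""
    answers = [
        "You can return items within 30 days.",
        "Returns are accepted within 30 days of purchase.",
        "Sorry, I am not sure about the return policy.",
    ]
    return rng.choice(answers)


def pass_rate(
    bot: Callable[[str, random.Random], str],
    prompt: str,
    check: Callable[[str], bool],
    runs: int,
    seed: int = 0,
) -> float:
    """Send the same prompt `runs` times and return the fraction of answers that pass."""
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    passed = sum(1 for _ in range(runs) if check(bot(prompt, rng)))
    return passed / runs


def mentions_30_days(answer: str) -> bool:
    """Illustrative check: the answer must state the (assumed) 30-day window."""
    return "30 days" in answer


rate = pass_rate(fake_bot, "What is your return policy?", mentions_30_days, runs=50)
print(f"pass rate: {rate:.0%}")
```

In practice the check is usually richer than a keyword match, and the threshold for an acceptable pass rate is a judgment call for each team.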

This calls for new ways of working, new tools, and new frameworks. It also requires accepting that best practices are still being defined. LLMs do not behave like earlier systems. There is still no clear agreement on how to test them.

At Hangar5, we work closely with enterprise teams building AI chat and voice bots. The question we hear most is simple: how do we know if this actually works? We have been building our own answers. We will share them here. We will also be honest. This space is still uncertain. We can only share what we have learned and what seems to work. Models, tools, and practices are still evolving.

That is the spirit of this series.

The Testing Gap Is Bigger Than You Think

Think about how most teams “test” an LLM chatbot today. A product manager tries a few scenarios. The team shares a staging link. A few colleagues try it. Someone in support gives it a go. It answers demo questions well. Then it ships.

That is not testing. That is hoping.

The cost is not always immediate. LLM bots often fail slowly. A wrong answer here. A broken conversation there. A poor response in an edge case that appears at scale. By the time you notice a pattern, thousands of conversations may already be affected.

Voice bots make this worse. In chat, users can re-read and try again. In voice, they cannot. When it fails, the user often tries to reach a human straight away. They may also share the bad experience with others. Quality matters more, and feedback arrives more slowly.

Now consider scale. If a human agent makes mistakes for a few days, how many customers are affected? Maybe fifty or a hundred. That is manageable. You correct it and move on.

An AI agent behaves differently. It does not handle fifty conversations. It can handle fifty thousand. Unlike a human, it does not get tired or question itself. It repeats the same mistake with the same confidence every time. No hesitation. No correction. No sense that something is wrong.

This changes how we think about testing. It is not only about quality. It is about the scale of impact. A small issue can affect thousands of customers. The only protection is your ability to catch it before release, or very soon after.
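A rough back-of-the-envelope calculation makes the scale argument concrete. The conversation volume comes from the example above. The 2% error rate is an illustrative assumption, not a measured figure.

```python
def affected_conversations(total_conversations: int, error_rate: float) -> int:
    """Estimate how many conversations hit a given failure."""
    return round(total_conversations * error_rate)


ASSUMED_ERROR_RATE = 0.02  # assumption for illustration only

ai_affected = affected_conversations(50_000, ASSUMED_ERROR_RATE)
print(ai_affected)  # 1000
```

Even at an error rate that sounds small, the AI agent in this sketch affects far more customers than the fifty or a hundred in the human example.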

There Are at Least 15 Ways to Test These Systems

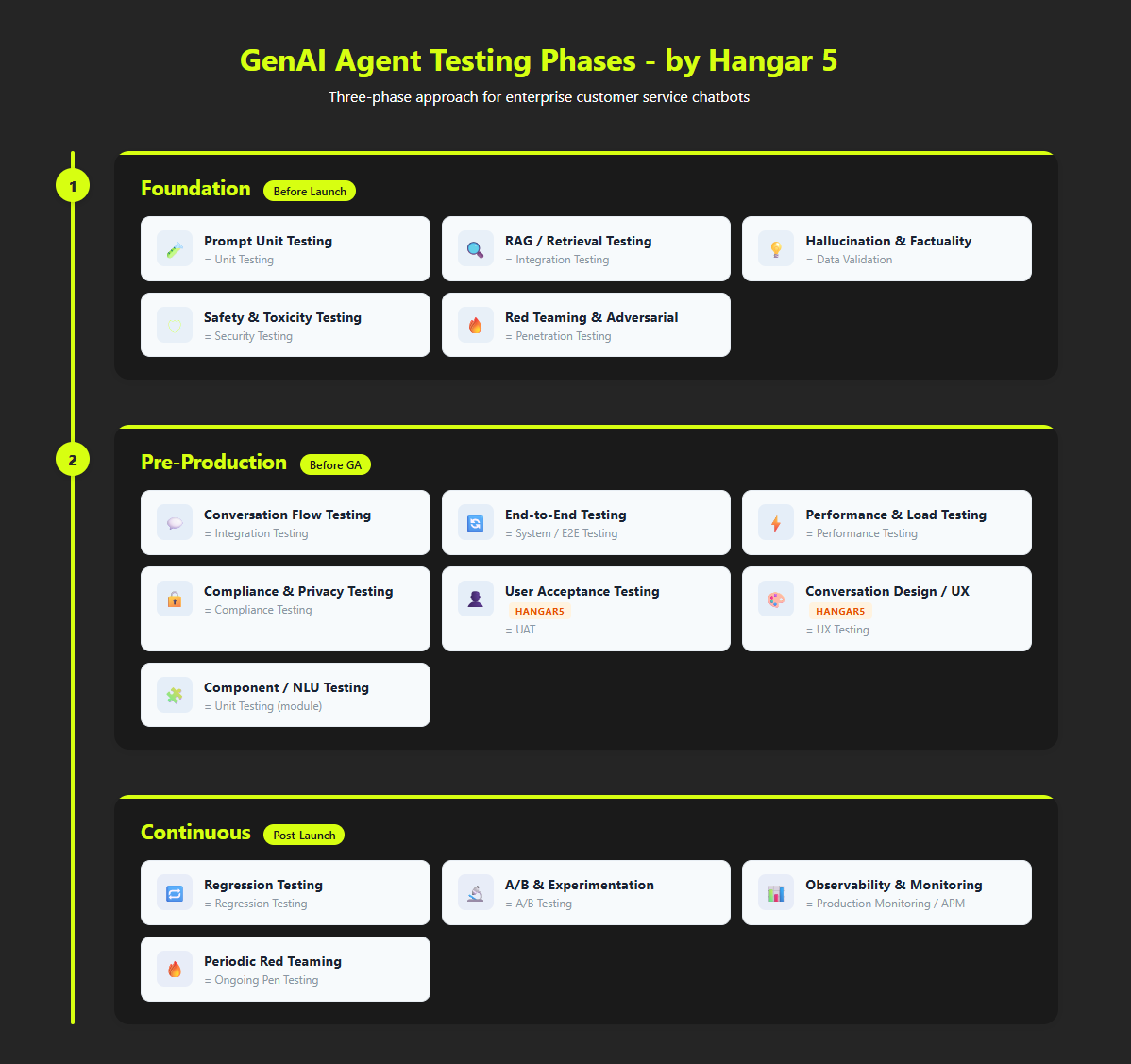

From our work on many enterprise GenAI projects, we have identified 15 types of testing for LLM chat and voice bots. These cover areas such as prompt evaluation, jailbreak risks, and performance over time.

These tests fall into three phases:

Phase 1: Foundation (before any demo)

These tests check if the bot is safe and coherent enough to show. They include prompt testing, retrieval quality, hallucination checks, safety scans, and adversarial testing.

Phase 2: Pre-Production (before real users)

These tests check if the bot can handle real use. They include conversation flow testing, end-to-end testing, performance, compliance, user acceptance, and conversation design.

Phase 3: Continuous (after launch)

These tests keep the bot stable over time. They include regression testing, A/B testing, and production monitoring. The bot you launch is not the same after 90 days. Most teams cannot tell if it improved or got worse.
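One way to tell whether a bot improved or got worse is to rerun a fixed set of test cases against each version and compare the results. The sketch below assumes pass/fail results are already recorded per test case. The case names and results are hypothetical.

```python
from typing import Dict, List


def find_regressions(baseline: Dict[str, bool], current: Dict[str, bool]) -> List[str]:
    """Return the test cases that passed in the baseline but do not pass now.

    A case missing from `current` is treated as failing.
    """
    return sorted(
        case
        for case, passed in baseline.items()
        if passed and not current.get(case, False)
    )


# Hypothetical results for two versions of the same bot.
baseline_results = {"refund_policy": True, "opening_hours": True, "cancel_order": False}
current_results = {"refund_policy": True, "opening_hours": False, "cancel_order": True}

regressions = find_regressions(baseline_results, current_results)
print(regressions)  # ['opening_hours']
```

Here one case improved and one regressed. Without the per-case comparison, the overall pass count would look unchanged.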

Fifteen types is a long list. We are not saying otherwise.

You Do Not Need All 15. You Need the Right Ones.

The most common mistake is not skipping testing altogether. It is trying to do everything at once. Teams get overwhelmed and go back to manual checks.

The right approach depends on your stage. A team building its first bot faces different risks from a team running several bots across channels. Some tests matter early. Others are only useful later. Doing them too soon creates noise.

We map the 15 types across three levels:

- Starter: First project, limited tools, mostly manual. Four tests are essential. The rest can wait.

- Intermediate: One or two bots live. You know what can break. Eight tests become important. You start automating.

- Advanced: Mature setup with bots across channels. All 15 tests matter. Most should run continuously.

This is the structure of the series.

How to Use This Series

Start with Post 2 if this is your first chatbot, or if testing is mostly manual. We cover the four tests you need before launch and how to run them without special tools.

Read Post 3 if you already have a live bot and want to know if your setup is enough. It explains what becomes critical once you are in production.

Go to Post 4 if you run multiple bots and want a mature testing approach. It also highlights where advanced teams still struggle.

We will stay specific. We will name each test, explain what it catches, and show where tools are still weak. Some advice may change over time. That is expected.