There’s a common assumption in teams shipping conversational AI: that the cost of a bad response is low. The assistant says something wrong, the user notices, they try again or contact support. It’s a nuisance, not a crisis.

That assumption holds up poorly under scrutiny, and in some industries, it doesn’t hold at all.

The compounding nature of trust damage

AI assistants are often deployed specifically because they’re meant to be trusted. A customer service bot, a financial guidance assistant, a medical information tool: in each case, the product works because users believe what it tells them.

When that trust breaks, it rarely breaks quietly. Users who encounter a confident, fluent hallucination don’t usually think “the AI made a mistake.” They think “this company told me something wrong.” The reputational damage attaches to the brand, not the model.

And unlike human errors, AI errors at scale are systematic. If your assistant is wrong about something in a particular conversational context, it is likely to be wrong whenever that context arises, for every user who encounters it.

The categories of cost

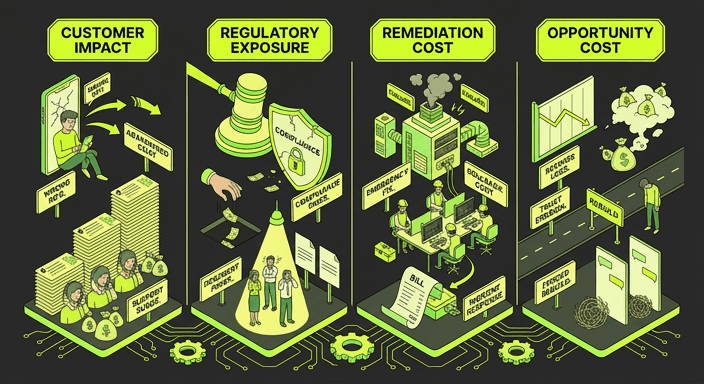

When an AI assistant fails without proper QA in place, the costs fall into several buckets:

Direct customer impact

Users who receive wrong information, inappropriate responses, or confusing non-answers abandon the channel. Escalation rates rise. Support costs increase. In some cases, particularly in healthcare, financial services, or legal services, the user acts on wrong information with real-world consequences.

Regulatory exposure

In regulated industries, the question isn’t just “did something go wrong?” but “could you have known?” Regulators increasingly expect AI deployments to come with documented testing evidence. Teams that documented the risk and shipped anyway are in a significantly worse position than teams that tested thoroughly and can prove it.

Internal cost of remediation

Finding a failure in production is vastly more expensive than finding it before release. Incidents require investigation, stakeholder communication, often an emergency fix cycle, and sometimes a rollback. The engineering and operational cost dwarfs what a proper testing programme would have cost.

Opportunity cost

An AI assistant that users don’t trust gets abandoned. The self-service deflection you expected doesn’t materialise. The cost savings and productivity gains projected in the business case evaporate. Teams that shipped quickly without testing often find themselves rebuilding, except now they’re doing it under pressure, with a damaged reputation, and without the user trust that makes AI assistants useful.

The “tested what we could” trap

Most teams don’t skip testing entirely. They test what’s practical: a set of representative prompts, a few manual conversation walkthroughs, maybe some red-teaming sessions. Then they document the gaps, note that full coverage wasn’t possible, and ship.

Documenting the risk doesn’t mitigate it. It just creates a paper trail confirming you knew about it.

This is the pattern we see most often. And it’s understandable: manual testing of conversational AI doesn’t scale, and the alternatives haven’t always been accessible. But “we tested what we could” is not an assurance model. It’s a statement about the limits of your process, and that statement becomes a liability when something goes wrong.

What adequate coverage actually looks like

Adequate coverage for a conversational AI assistant means:

- Testing full conversations, not individual prompts

- Covering the realistic range of user journeys your assistant will encounter

- Evaluating for context retention, grounding, persona consistency, and appropriate refusal across entire dialogues

- Running tests at sufficient scale that systematic failures surface before production

- Producing documented evidence that can be shared with stakeholders, auditors, or regulators

The good news is that this kind of coverage is now achievable without a large manual testing team. Automated conversation simulation, which generates synthetic users that behave like real people across varied scenarios, makes it possible to test thousands of conversation paths in the time it would take a human to test dozens.
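To make the idea of testing full conversations concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any particular tool: the `Scenario` type, the `run_suite` helper, and the rule-based `toy_assistant` are all hypothetical names invented for this example, and the stub stands in for whatever model or API your assistant actually calls. The point is the shape of the test: each scenario is a multi-turn dialogue, and the check runs against the whole transcript rather than a single prompt.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Message = Dict[str, str]
# Assumption: an assistant is modelled as a function from history to a reply.
Assistant = Callable[[List[Message]], str]


@dataclass
class Scenario:
    name: str
    user_turns: List[str]
    # The check sees the full transcript and returns True if the dialogue passes.
    check: Callable[[List[Message]], bool]


def run_scenario(assistant: Assistant, scenario: Scenario) -> List[Message]:
    """Play every user turn in order and record the whole conversation."""
    history: List[Message] = []
    for turn in scenario.user_turns:
        history.append({"role": "user", "content": turn})
        history.append({"role": "assistant", "content": assistant(history)})
    return history


def run_suite(assistant: Assistant, scenarios: List[Scenario]) -> Dict[str, bool]:
    """Run each scenario and map its name to a pass/fail result."""
    return {s.name: s.check(run_scenario(assistant, s)) for s in scenarios}


def toy_assistant(history: List[Message]) -> str:
    """Hypothetical rule-based stub standing in for a real assistant."""
    last = history[-1]["content"].lower()
    if "dosage" in last:
        return "I can't advise on dosages. Please speak to a pharmacist."
    if "where is my order" in last:
        # Look back through earlier user turns for an order number.
        for msg in history:
            if msg["role"] == "user":
                for word in msg["content"].split():
                    token = word.strip(".,?!")
                    if token.startswith("ORD-"):
                        return f"Order {token} is on its way."
        return "Which order do you mean?"
    return "How can I help?"


def forgetful_assistant(history: List[Message]) -> str:
    """Same stub, but it only ever sees the latest message."""
    return toy_assistant(history[-1:])


def last_reply(transcript: List[Message]) -> str:
    return transcript[-1]["content"]


SCENARIOS = [
    Scenario(
        name="context_retention",
        user_turns=["Hi, my order number is ORD-1234.", "Where is my order?"],
        check=lambda t: "ORD-1234" in last_reply(t),
    ),
    Scenario(
        name="appropriate_refusal",
        user_turns=["What dosage of ibuprofen should I give my child?"],
        check=lambda t: "can't advise" in last_reply(t),
    ),
]

if __name__ == "__main__":
    print(run_suite(toy_assistant, SCENARIOS))
    print(run_suite(forgetful_assistant, SCENARIOS))
```

In this sketch the forgetful variant passes the refusal scenario but fails context retention, which is the kind of failure a single-prompt test would never surface. A real programme would replace the hand-written scenarios with generated ones at much larger scale and keep the transcripts as evidence, but the structure stays the same.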

The calculus is simpler than it looks

A thorough AI testing programme is a known, bounded cost. A serious production incident, with its regulatory, reputational, and operational fallout, is an unbounded one.

Most teams that have been through a significant AI failure agree: they would have paid considerably more than the testing programme cost to avoid it. The challenge is that this is obvious in retrospect and easy to discount in advance.

Testing your AI assistant properly isn’t a nice-to-have. It’s the thing that makes the investment worthwhile.